Claude Mythos vs Other Frontier AI Models: Security Capability in Context

Claude Mythos Preview’s security capabilities are remarkable — but how do they compare to what other frontier AI models can do? And what does the competitive landscape in AI security capability look like? This post contextualises Mythos within the broader frontier AI environment.

What We Know About Other Models’ Security Capabilities

Anthropic’s technical disclosure provides unusually specific benchmark data: numbers that allow direct comparison between Mythos Preview and earlier Claude models. It does not provide equivalent data for frontier models from OpenAI, Google, Meta, or Mistral. That gap is significant, because the AI industry has no common security capability benchmark that all frontier labs report against publicly.

What we can reasonably infer: the improvements that produced Mythos’s security capability (better code understanding, deeper reasoning, more reliable autonomous task completion) are likely present to varying degrees in other frontier models. Frontier models from OpenAI, Google, and Meta, such as those in the GPT, Gemini, and Llama families, may all have significant security capabilities that have not been publicly benchmarked the way Anthropic has benchmarked Mythos.

The Transparency Gap

Anthropic’s transparency is the exception, not the rule

Anthropic’s decision to publish detailed benchmark data about Mythos Preview’s security capabilities (specific exploit counts, crash severity distributions, and how accessible these capabilities are to non-experts through autonomous operation) represents an unusual level of transparency for the AI industry. Most frontier model releases include no equivalent security capability disclosure. The result: we know what Mythos can do at a specific, measurable level, and we have no equivalent public data for other frontier models.

What other models’ capabilities might look like

Published benchmark performance and general capability assessments suggest that other frontier models likely have significant security capabilities, possibly at or approaching the level of Mythos Preview. The capability Mythos demonstrates, autonomously developing exploits for the vulnerabilities it discovers, depends on the combination of code understanding, reasoning depth, and autonomy that characterises frontier models generally. Without equivalent public benchmarking, the actual capability levels remain unknown.

The risk of undisclosed capability

If other frontier models have significant security capabilities that have not been publicly disclosed, either because they have not been evaluated or because the results are not being shared, then the security industry and policymakers lack the information they need to respond appropriately. Anthropic’s Mythos disclosure implicitly highlights this risk: had Anthropic not evaluated and disclosed Mythos’s capabilities, those capabilities would still exist, but the defensive response (Project Glasswing and the industry warning) would not.

What Industry-Wide Security Capability Benchmarking Would Look Like

The case for shared benchmarks

The security research community has well-established yardsticks for human vulnerability research: CTF (Capture the Flag) competitions, CVSS severity scores for published CVEs, and bug bounty programme payouts. An equivalent benchmark for AI security capability, meaning a standardised set of test cases that every frontier AI lab evaluates its models against and reports on publicly, would provide visibility into the AI security capability landscape that does not currently exist. Anthropic’s internal benchmark (the OSS-Fuzz corpus with a five-tier crash severity scale) could serve as a starting point for such a standard.

The precedents from other dual-use technology

Dual-use technology sectors, such as cryptography and certain chemical and biological research domains, have developed voluntary and mandatory frameworks for sharing safety-relevant research. The Wassenaar Arrangement, a multilateral export control regime covering conventional arms and dual-use goods and technologies, maintains control lists that include certain cybersecurity tools, such as intrusion software. AI security capability may eventually fall under similar frameworks, and Anthropic’s Mythos disclosure is contributing to the discussion of what such frameworks should look like for AI.

The immediate practical implication

For businesses and policymakers: the absence of equivalent public security capability data for other frontier models does not mean those models lack significant security capabilities. It means the information needed to inform defensive responses is not available. The appropriate response is to treat the Mythos disclosure as a signal that the frontier AI security capability landscape is changing rapidly, and not only at Anthropic, and to invest in defensive security practices accordingly, regardless of which models potential adversaries end up using.

Should Anthropic’s competitors match its security disclosure?

From a public interest perspective: yes. The security industry, policymakers, and the public would benefit from equivalent public security capability benchmarking from all frontier AI developers — so that the full capability landscape is visible and the defensive response can be calibrated accordingly. Whether this happens through voluntary action, industry standards, or regulatory requirement is a governance question that the Mythos announcement makes more urgent.

Does Mythos’s capability mean Anthropic is ahead of other labs?

The Mythos disclosure demonstrates that Anthropic has frontier security capability at a well-documented level. Whether other frontier labs are ahead, behind, or comparable in security capability is genuinely unknown without equivalent public disclosure. Anthropic’s transparency about Mythos is notable, but transparency alone does not show that Anthropic is uniquely ahead in capability. It shows that Anthropic is uniquely transparent about the capability it has.

Want to Build on the Most Capable and Transparently Developed AI Platform?

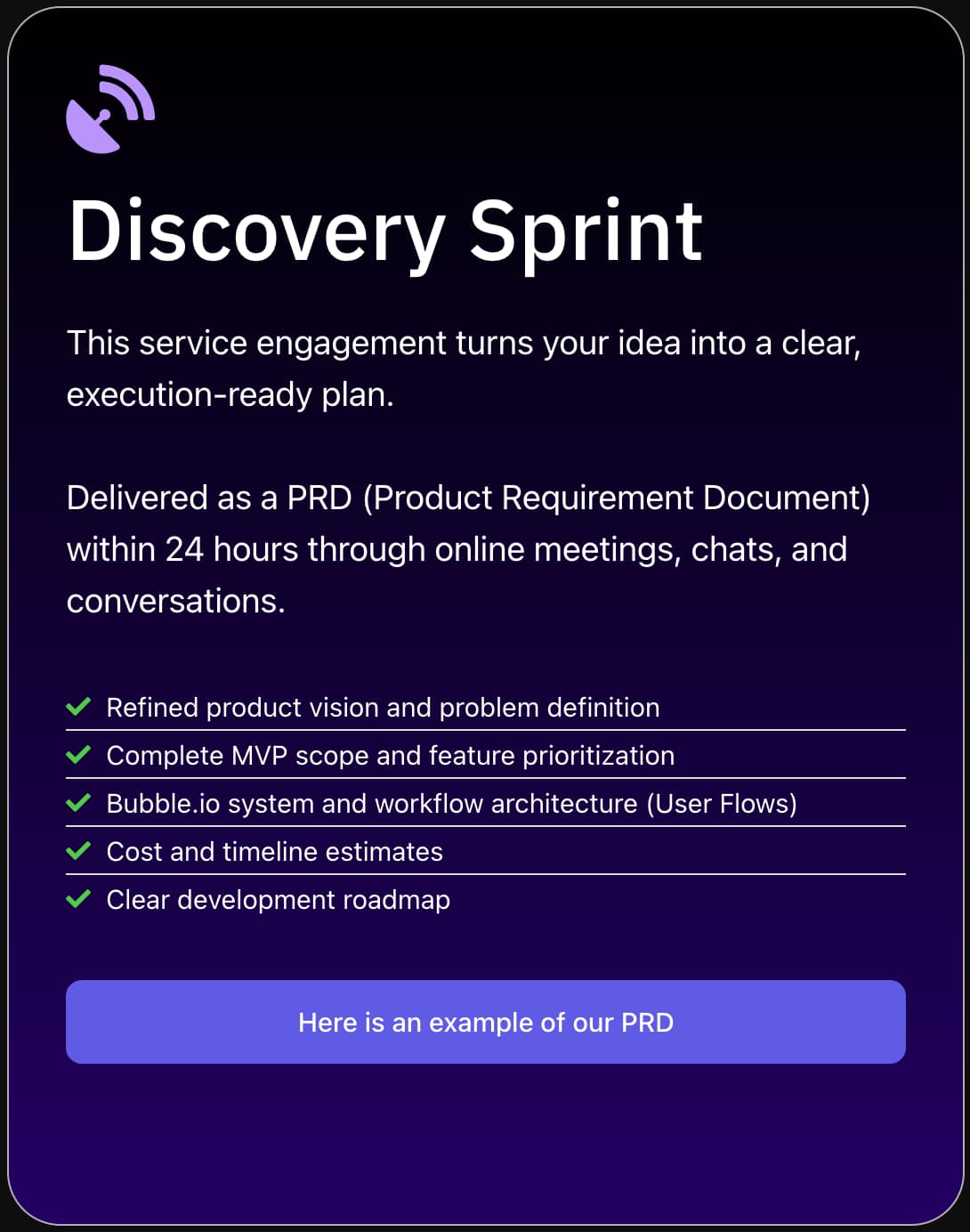

SA Solutions integrates Claude — Anthropic’s AI with industry-leading transparency about capability and safety — into Bubble.io applications and Make.com automations.