How to Get Your Team Using AI in 30 Days

The biggest AI implementation failure is not a technical one. It is the AI tool that gets purchased, demonstrated once, and then never used consistently. Team adoption is harder than team purchase and harder than team training. This is the 30-day plan that actually changes habits.

Why Most AI Training Fails

The typical AI training session is a 90-minute workshop: someone demonstrates Claude, the team is impressed, everyone leaves with good intentions, and two weeks later 80% of the team has reverted to their pre-AI workflow. The training itself was not bad. Training without workflow integration produces knowledge without habit.

The problem is not capability — most professionals can learn to use Claude in an afternoon. The problem is the daily decision point: when the pressure of real work arrives, the team reverts to the workflow they already know. The antidote to this is not more training; it is embedding AI into the existing workflow so the old approach requires more effort than the new one.

The 30-Day Adoption Programme

Week 1: Preparation and personalisation

Before any team training: map the specific tasks each team member performs most frequently (the time audit from Post 235, applied per role). For each role, identify the top 3 tasks that AI can accelerate most. Build the role-specific prompt library (Post 316) — 5 to 7 prompts for each role, each ready to use for the most common tasks. The account manager gets proposal drafting prompts, client update prompts, and objection handling analysis prompts. The finance person gets reconciliation analysis prompts, management accounts narrative prompts, and invoice drafting prompts. Each person starts with prompts that are directly useful for their actual work on day one.
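One way to keep the role-specific library organised is as a simple mapping from role to named prompt templates. The sketch below is illustrative only: the prompt wording, the placeholder names, and the helper functions `roles_outside_range` and `render` are assumptions, not part of any existing tool. It checks each role against the 5 to 7 prompt guideline and fills a template with real details.

```python
# Hypothetical prompt library: role -> {task name -> prompt template}.
# The template wording below is placeholder text for illustration only.
PROMPT_LIBRARY = {
    "account_manager": {
        "proposal_drafting": "Draft a proposal for {client} covering {scope}.",
        "client_update": "Write a client update for {client} summarising {progress}.",
        "objection_handling": "Analyse this objection from {client} and suggest responses: {objection}",
    },
    "finance": {
        "reconciliation_analysis": "Review these reconciliation differences and list likely causes: {differences}",
        "management_accounts_narrative": "Write a narrative for the {period} management accounts: {figures}",
        "invoice_drafting": "Draft an invoice to {client} for {work} totalling {amount}.",
    },
}


def roles_outside_range(library, min_prompts=5, max_prompts=7):
    """Return {role: prompt count} for roles outside the 5 to 7 guideline."""
    return {
        role: len(prompts)
        for role, prompts in library.items()
        if not min_prompts <= len(prompts) <= max_prompts
    }


def render(library, role, task, **details):
    """Fill one prompt template with the details of a real piece of work."""
    return library[role][task].format(**details)


# Both example roles have only 3 prompts so far, so both are flagged.
gaps = roles_outside_range(PROMPT_LIBRARY)
prompt = render(PROMPT_LIBRARY, "account_manager", "proposal_drafting",
                client="Acme", scope="a website rebuild")
```

A check like this is a quick way to see which roles still need prompts written before the week 2 training session.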

Week 2: Role-specific training and first use

Run one training session per role group (account managers together, operations together, finance together), capped at 90 minutes. Cover four things: what Claude can do that is relevant to this role specifically; the 5 to 7 prompts from the role-specific library, with live examples using real work from this week; the common failure modes to avoid (vague prompts, over-relying on AI output without review, using AI for tasks that genuinely require human judgment); and the one AI habit to start this week. That habit: every team member selects their highest-frequency task and commits to using their Claude prompt for it every time it occurs in the next 7 days. One habit, one week.

Week 3: Integration and expansion

Check in with every team member: which tasks are they using AI for, what is working well, what outputs are not good enough yet, and what would make the prompts better? For any prompt that is not producing useful outputs, refine it together in 10 minutes. Add one new AI task for each person: the highest-frequency task not yet AI-assisted. By the end of week 3, each team member is using AI for at least 2 tasks consistently. The manager makes a visible point of asking about AI use in team check-ins, which normalises AI use as an expectation rather than an optional extra.

Week 4: Measurement and institutionalisation

Measure adoption: which team members are using AI consistently (3 or more times per week), which are using it occasionally (1 to 2 times), and which are not using it at all. Address the non-adopters individually — the barrier is almost always either a specific workflow that is not covered by the prompt library (build the prompt together) or a concern about AI quality (review specific examples together). Calculate the team’s collective time saving in week 4 vs the week before training. Share the number with the team: the visibility of collective impact reinforces adoption. Institutionalise: AI use becomes part of the weekly team review, the monthly performance review, and the onboarding process for new team members.
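The week 4 measurement can be sketched in a few lines. This is a minimal illustration, assuming you have recorded, for each person, how many times they used AI in week 4 and the hours they spent on their tracked tasks before training and in week 4. The `MemberWeek` record, its field names, and the sample figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MemberWeek:
    name: str
    ai_uses: int           # times AI was used in week 4
    baseline_hours: float  # hours on tracked tasks in the week before training
    week4_hours: float     # hours on the same tasks in week 4


def adoption_tier(ai_uses: int) -> str:
    """Classify weekly usage using the thresholds from the plan."""
    if ai_uses >= 3:
        return "consistent"
    if ai_uses >= 1:
        return "occasional"
    return "non-adopter"


def collective_hours_saved(team) -> float:
    """Team-wide time saving: week before training minus week 4."""
    return sum(m.baseline_hours - m.week4_hours for m in team)


# Made-up sample data for illustration.
team = [
    MemberWeek("Account manager", ai_uses=5, baseline_hours=10.0, week4_hours=7.5),
    MemberWeek("Finance", ai_uses=2, baseline_hours=8.0, week4_hours=7.0),
    MemberWeek("Operations", ai_uses=0, baseline_hours=9.0, week4_hours=9.0),
]

tiers = {m.name: adoption_tier(m.ai_uses) for m in team}
saved = collective_hours_saved(team)
```

With the sample data, the operations member is the one to follow up with individually, and the collective saving is the number to share with the team.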

What if some team members resist AI adoption?

Resistance to AI adoption typically comes from one of three sources: fear that AI will replace their job (address with honest conversation about how AI changes roles rather than eliminates them), scepticism about AI quality (address by showing specific examples where AI output saved time and met quality standards), or genuine workflow mismatch (address by building the specific prompt that makes AI useful for this person’s actual work). Resistance that persists after all three are addressed is a management issue — AI use is a professional expectation, not an optional extra.

How do I measure team AI adoption?

The metrics that reflect genuine adoption rather than theoretical capability: frequency of AI tool use per team member per week (target: 3 or more times weekly by week 4), percentage of the team’s top 5 tasks that have an AI workflow (target: 3 of 5 by month 2), and time saved per team member per week compared to the pre-training baseline (target: 1 or more hours by month 1, 3 or more hours by month 3). Self-reported data is directional; observed usage data (available in some tool admin panels) is more reliable.
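The three targets can be checked against one person's figures with a small helper. This is a sketch under stated assumptions: the `AdoptionSnapshot` record and its field names are hypothetical, and because no hours target is given for month 2, the month 1 target is assumed to apply until month 3.

```python
from dataclasses import dataclass


@dataclass
class AdoptionSnapshot:
    weekly_ai_uses: int          # AI tool uses this week
    top5_tasks_with_ai: int      # how many of the top 5 tasks have an AI workflow
    hours_saved_per_week: float  # versus the pre-training baseline


def hours_target(month: int) -> float:
    """1+ hours by month 1, 3+ hours by month 3.

    Assumption: the month 1 target holds through month 2.
    """
    return 3.0 if month >= 3 else 1.0


def check_targets(snapshot: AdoptionSnapshot, month: int) -> dict:
    """Return which targets are met for the given month of the programme."""
    results = {
        "weekly_use": snapshot.weekly_ai_uses >= 3,
        "hours_saved": snapshot.hours_saved_per_week >= hours_target(month),
    }
    # The task coverage target (3 of 5) only applies from month 2 onwards.
    if month >= 2:
        results["task_coverage"] = snapshot.top5_tasks_with_ai >= 3
    return results


# Made-up example: month 2, using AI 4 times a week on 2 of 5 tasks, saving 1.5 hours.
status = check_targets(AdoptionSnapshot(4, 2, 1.5), month=2)
```

In this example the person meets the usage and time-saving targets but not the task coverage target, which points to adding one more AI-assisted task rather than more training.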

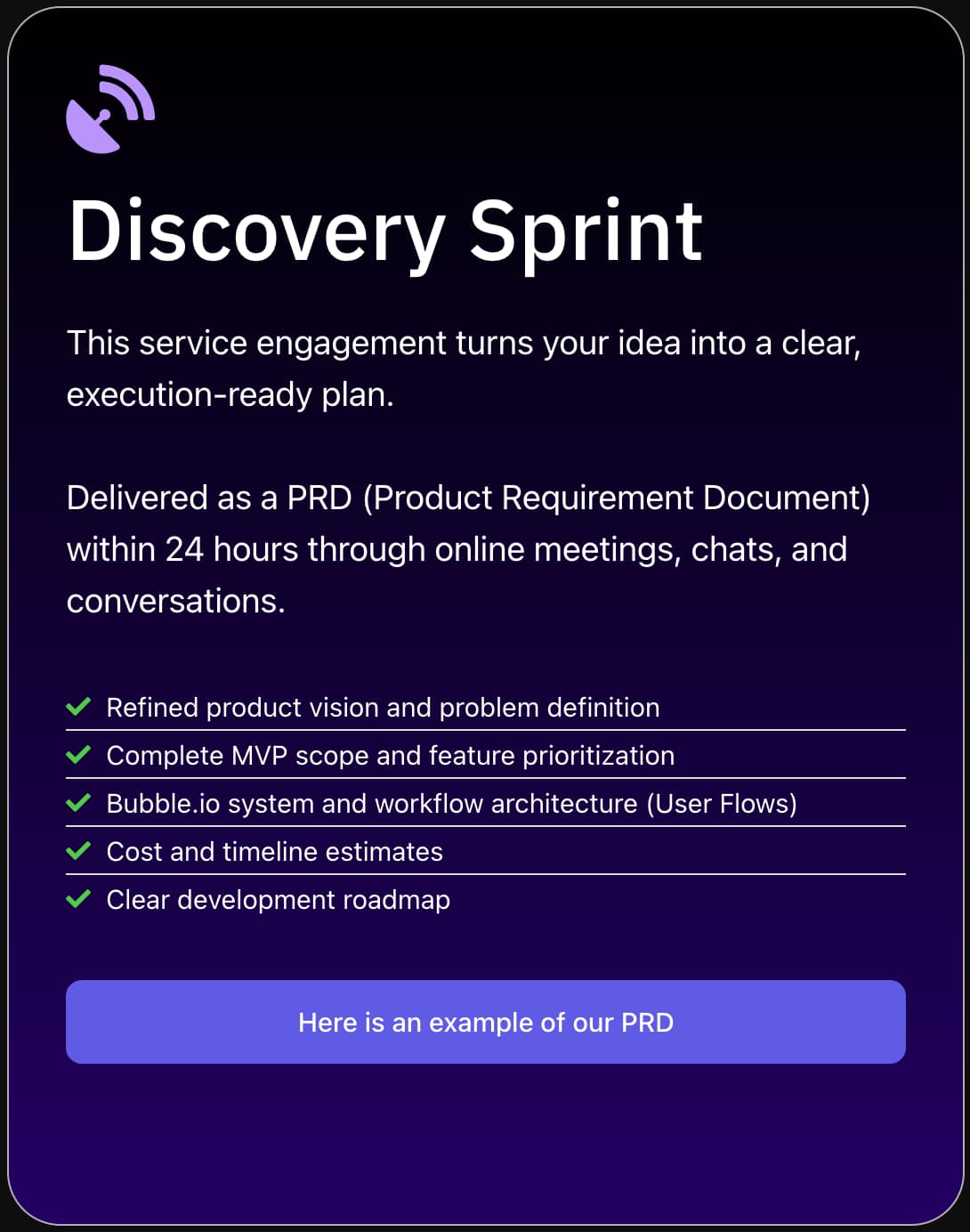

Want a Team AI Adoption Programme Delivered?

SA Solutions delivers role-specific AI training, prompt library development, workflow integration, and adoption measurement for teams of 3 to 50 people.