Responsible AI Release: How Anthropic’s Mythos Announcement Sets a New Standard

The way Anthropic released Claude Mythos Preview — limited initial access, technical transparency, coordinated defensive deployment, industry-wide communication — is as significant as the model itself. This post examines what responsible release looks like at the frontier and what the broader AI industry should take from it.

The Components of Responsible Release

Comprehensive capability evaluation before release

Anthropic tested Mythos Preview against real security benchmarks (actual open-source codebases, actual browser vulnerabilities) before announcing the model. That evaluation surfaced the security capability that made a standard broad release inappropriate. The principle: evaluate capabilities thoroughly before release, not after. Doing so requires investment in evaluation infrastructure (benchmarks, test environments, evaluation expertise) and a willingness to delay release when concerning capabilities are discovered, which carries a real commercial cost.

Technical transparency about concerning findings

Anthropic published detailed technical information about what Mythos Preview can do, including the specific exploits it constructed, the benchmark results it achieved, and how it compares with prior models. This transparency serves the security community: researchers and defenders can understand the capability level of what is coming, calibrate their own defensive investments accordingly, and contribute to the coordinated defensive response. The alternative, releasing the model without this transparency, would have left the security community unprepared for a capability it would eventually encounter.

Coordinated defensive deployment before broad access

Project Glasswing — deploying Mythos Preview’s capabilities to vetted defensive partners before broad release — is the most operationally demanding component of the responsible release framework. It requires: identifying and vetting appropriate partners, building the deployment and monitoring infrastructure, managing coordinated vulnerability disclosure for the findings, and maintaining the limited-access model while the defensive work proceeds. This has real costs. It is also, Anthropic clearly believes, the right approach.

What This Means for AI Industry Norms

The evaluation standard

Anthropic’s approach implies that frontier AI models should be evaluated comprehensively for security capabilities — not just for the capabilities the model is designed to have, but for the full range of capabilities that may have emerged from general improvement. This is a higher evaluation bar than many current industry practices. Establishing this as a standard — through Anthropic’s example and through regulatory or industry guidance — would mean that every frontier model release includes a security capability evaluation comparable to what Anthropic conducted for Mythos.

The transparency norm

Publishing technical details of concerning capabilities, including specific benchmark results and the nature of what the model can do, is a transparency norm that the Mythos announcement exemplifies. This is not universal in the AI industry — some releases provide very limited technical detail about capabilities, positive or concerning. The argument for transparency: the security community, policymakers, and the public can only respond appropriately to AI capability advances if they understand what those advances are. Anthropic’s disclosure enables a more informed, effective industry response.

The responsible deployment sequence

The Mythos release sequence — evaluate, discover concerning capabilities, engage defensive partners, deploy defensively, disclose technically, then proceed toward broader access — is a template for responsible deployment of frontier models with dual-use capabilities. It is more complex, more costly, and slower than standard product release. It is also more aligned with the public interest in a safe transitional period. The industry norm question is whether this sequence becomes standard or remains exceptional.
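The ordering in that sequence can be made concrete with a small sketch. This is an illustration only, not Anthropic's process: the stage names are taken from the paragraph above, "discover concerning capabilities" is folded into the evaluation stage as a simplifying assumption, and `Stage`, `can_start`, and `next_stage` are hypothetical names.

```python
from enum import IntEnum
from typing import Optional, Set


class Stage(IntEnum):
    """Release stages in the order the post lists them (illustrative only)."""
    EVALUATE = 1                   # includes discovering concerning capabilities
    ENGAGE_DEFENSIVE_PARTNERS = 2
    DEPLOY_DEFENSIVELY = 3
    DISCLOSE_TECHNICALLY = 4
    BROADER_ACCESS = 5


def can_start(stage: Stage, completed: Set[Stage]) -> bool:
    """A stage may start only when every earlier stage is complete."""
    return all(earlier in completed for earlier in Stage if earlier < stage)


def next_stage(completed: Set[Stage]) -> Optional[Stage]:
    """Return the earliest stage not yet completed, or None if all are done."""
    for stage in Stage:
        if stage not in completed:
            return stage
    return None
```

With only `Stage.EVALUATE` complete, `can_start(Stage.BROADER_ACCESS, {Stage.EVALUATE})` is false, which is the point of the sequence: broader access is gated on the defensive and disclosure steps rather than scheduled alongside them.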

📌 Anthropic describes the transitional period as potentially 'tumultuous regardless', even with responsible release practices. That is an honest acknowledgement that no release approach eliminates risk during the transition; it only manages it. The goal of the responsible release framework is not to prevent all harm but to ensure that defensive deployment proceeds faster than offensive capability diffuses, and that the industry is as prepared as possible when models with similar capabilities become broadly available.

Does this approach slow down beneficial AI development?

There is a genuine tension between moving quickly to deploy beneficial AI and taking the time for thorough evaluation and responsible release. Anthropic’s approach accepts some commercial cost — delayed broad access, significant investment in Project Glasswing — in exchange for a safer transitional period. Whether other frontier labs adopt similar approaches or prioritise speed will be one of the defining questions for AI safety during this period. Regulation may eventually require similar evaluation and disclosure practices, reducing the competitive disadvantage of responsible release approaches.

What can smaller AI labs and businesses building on AI learn from this?

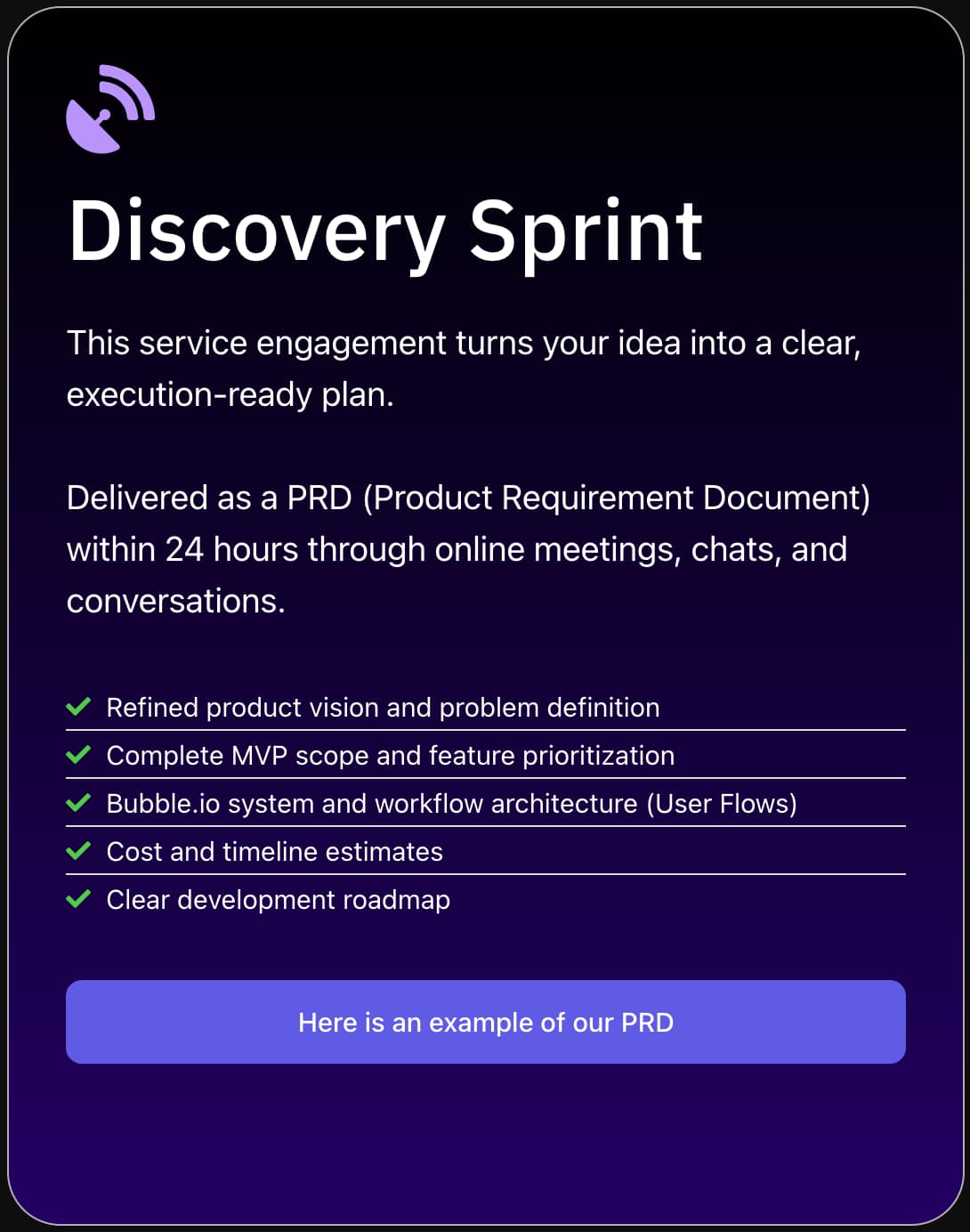

The responsible release principles Anthropic demonstrates scale down to smaller contexts: evaluate AI tools for unintended capabilities before broad deployment, be transparent about limitations and risks with users and stakeholders, and build governance frameworks that can identify and respond to concerning uses. For businesses building on Claude or other AI APIs — including via Bubble.io and Make.com — this means implementing appropriate safeguards, monitoring for unexpected uses, and being transparent with users about AI capabilities and limitations in your product.
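For a small product, the three practices named above (safeguards, monitoring, transparency) might look something like the following minimal sketch. Everything here is an assumption for illustration: `guarded_call`, `GuardedResult`, `REVIEW_TERMS`, and the disclosure wording are hypothetical, the keyword check is a crude stand-in for a real policy, and `model_call` is a placeholder for whatever function your product uses to reach an AI API.

```python
import logging
from dataclasses import dataclass
from typing import Callable, List

logger = logging.getLogger("ai_governance")

# Hypothetical policy: terms that route a request to human review.
REVIEW_TERMS = ("exploit", "malware", "bypass authentication")

# Hypothetical user-facing disclosure appended to every AI reply.
DISCLOSURE = "This response was generated by an AI system and may contain errors."


@dataclass
class GuardedResult:
    text: str
    needs_human_review: bool
    flagged_terms: List[str]


def guarded_call(prompt: str, model_call: Callable[[str], str]) -> GuardedResult:
    """Wrap a model call with a pre-check, logging, and a disclosure."""
    lowered = prompt.lower()
    flagged = [term for term in REVIEW_TERMS if term in lowered]
    if flagged:
        # Safeguard: do not call the model; hold the request for a person.
        logger.warning("Prompt held for review; matched terms: %s", flagged)
        return GuardedResult("Your request has been sent for human review.", True, flagged)
    # Monitoring: record that a request passed, without logging its content.
    logger.info("Prompt passed pre-check (%d characters)", len(prompt))
    reply = model_call(prompt)
    # Transparency: tell the user the reply came from an AI system.
    return GuardedResult(f"{reply}\n\n{DISCLOSURE}", False, [])
```

Passing the model function in as a parameter keeps the governance logic separate from any particular provider, so the same wrapper can sit in front of different AI APIs, and the log output gives a starting point for spotting unexpected uses over time.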

Want to Build AI Applications with Responsible Practices?

SA Solutions builds AI-powered business tools with appropriate governance — human oversight, transparent AI disclosure, and security best practices built into every implementation.
